Data reusability: The next step in the evolution of analytics

Data reusability will lessen the response time to emerging opportunities and risks, allowing organisations to remain competitive in the digital economies of the future.

- If data's meaning can be defined across an enterprise, the insights that can be derived from it expand exponentially

- When financial institutions work together to identify useful data analytics solutions they can produce great results and add a lot of value to their customers

- The analytic systems of tomorrow should be able to take the same data set and process it without modifying it

Data reusability: The next step in the evolution of analytics 2017-07-25T16:36:19+00:00

Editor's Note: This article originally appeared on The Asian Banker on July 20, 2017.

By Keith Furst and Daniel Wagner

If data is the new oil, then many of the analytical tools used to extract value from it each require their own specific grade of gasoline. It is akin to owning a car that runs on only one grade of fuel, sold at a single gas station within a 500-mile radius. It sounds completely ridiculous and unsustainable, but that is how many analytical tools are set up today.

Many organisations have data sets that can be used with a myriad of analytical tools. Financial institutions, for example, can use their customer data as an input to determine client profitability, credit risk, anti-money laundering compliance, or fraud risk. However, the current paradigm for many analytical tools requires that the data to be used must conform to a specific model in order to work. That is often like trying to fit a square peg in a round hole, and there are operational costs associated with maintaining each custom-built tunnel of information.

The advent of big data has opened up a whole host of possibilities in the analytics space. By distributing workloads across a network of computers, complex computations can be performed on large volumes of data at a very fast pace. For information-rich and regulatory-burdened organisations such as financial institutions, this has value, but it doesn’t address the wasteful costs associated with inflexible analytic systems.

What are data lakes?

The "data lake" can provide a wide array of benefits for organisations, but the data that flows into the lake should ideally go through a rigorous data integrity process to ensure that the insights produced can be trusted. The data lake is where the conversation about data analytics can shift to what it really ought to be about.

Data lakes are supposed to centralise all the core data of the enterprise, but if each data set is replicated and slightly modified for each risk system that consumes it, then the data lake’s overall value to the organisation is diminished. The analytic systems of tomorrow should be able to take the same data set and process it without modifying it. If any data modifications are required, they could be handled within the risk system itself.
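The idea of several systems consuming one unmodified data set can be pictured with a minimal Python sketch. The record fields, the `credit_view` and `aml_view` functions, and the sample values are all hypothetical, chosen only to illustrate the pattern; they are not taken from any real risk system.

```python
from types import MappingProxyType

# One shared, read-only customer record, standing in for a row in the data lake.
# All field names and values here are invented for illustration.
CUSTOMER = MappingProxyType({
    "customer_id": "C-001",
    "opened": "2015-03-01",
    "country": "MX",
    "balance": 12500.0,
})


def credit_view(record):
    """Derive what a credit risk system needs without touching the source record."""
    return {"customer_id": record["customer_id"], "exposure": record["balance"]}


def aml_view(record):
    """Derive what an AML system needs from the very same record."""
    return {"customer_id": record["customer_id"], "jurisdiction": record["country"]}
```

Each consumer builds its own derived view, so any reshaping lives inside the consuming system and the shared record stays as it was loaded.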

That would require robust new computing standards, but at the end of the day, a date is still a date. Ultimately, it doesn’t matter what date format convention is being followed because it represents the same concept. While there may be a need to convert data to a local date-time in a global organisation, some risk systems enforce date format standards which may not align with the original data set. This is just one example of pushing the responsibility for data maintenance onto the client, as opposed to handling a robust array of data formats seamlessly in-house.
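As a rough illustration of handling several date formats inside the consuming system, the sketch below tries a short list of formats in turn and returns the same `date` value whichever convention the source used. The format list and the `parse_date` name are assumptions made for this example, not a description of any particular product.

```python
from datetime import date, datetime

# Hypothetical set of conventions a risk system might agree to accept.
ACCEPTED_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y%m%d")


def parse_date(raw: str) -> date:
    """Return the calendar date that raw represents, trying each format in order."""
    text = raw.strip()
    for fmt in ACCEPTED_FORMATS:
        try:
            return datetime.strptime(text, fmt).date()
        except ValueError:
            continue  # not this convention, try the next one
    raise ValueError(f"Unrecognised date format: {raw!r}")
```

A caveat on the design: a value such as 03/04/2017 is ambiguous between day-first and month-first conventions, so a real system would need metadata about the source rather than guessing. This sketch simply assumes day-first.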

The conversation needs to shift to what data actually means, and how it can be valued. If data’s meaning can be defined across the enterprise, the insights that can be derived from it expand exponentially. The current paradigm of the data model in the analytics space pushes the maintenance costs onto the organisations which use the tools, often impeding new product deployment. With each proposed change to an operational system, risk management systems end up adjusting their own data plumbing in order to ensure that they don’t have any gaps in coverage.

Data analytics solutions add value

When financial institutions work together to identify useful data analytics solutions they can produce great results and add a lot of value to their customers. The launch of Zelle is a perfect example, since customers from different banks can now send near real-time payments directly to one another using a mobile app.

A similar strategy should be used to nudge the software analytics industry in the right direction. If major financial institutions banded together with economies of scale to create a big data consortium where one of the key objectives was to make data reusable, then the software industry would undoubtedly create new products to accommodate it, and data maintenance costs would eventually go down. Ongoing maintenance costs would eventually migrate from financial institutions to the software industry, which has the operational and cost advantages.

There are naturally costs associated with managing risk effectively, but wasteful spending on inflexible data models takes money away from other things and stymies innovation. US regulators are notoriously aggressive when it comes to non-compliance, so reducing costs in one area could encourage investment into other areas, and ultimately strengthen the risk management ecosphere. Making data reusable and keeping its natural format would also increase data integrity and quality, and improve risk quantification based on a given model’s approximation of reality.

Reusable data will allow institutions to have a "first mover advantage"

Is the concept of reusable data too far ahead of its time? Not for those who use it, need it, and pay for it. Clearly, the institution(s) that embrace the concept will have the first mover advantage, and given the speed with which disruptive innovations are proceeding, it would appear that this is an idea whose time has come. As the world moves more towards automation and digitisation, it is becoming increasingly clear that the sheer diversity and sophistication of risks make streamlining processes and costs a daunting organisational task.

The time organisations have to react to risks in order to remain competitive, cost-efficient and compliant is shrinking, while actual response times are increasing, right along with a plethora of inefficiencies. Being in a position to recycle data for risk and analytics systems would decrease response times and enhance overall competitiveness. Both will no doubt prove to be essential components of successful organisations in the digital economies of the future.

Keith Furst is founder and financial crimes technology expert of Data Derivatives; and Daniel Wagner is managing director of Risk Cooperative.

How the 2016 United States Presidential Election Redefined Risk Management for the Better

The results of the 2016 United States Presidential election were shocking to some due to the polling statistics and the mainstream media's narrative around the highly probable election outcome which turned out to be wrong.

No matter what you think of Trump and the final result of the election, it will be studied for generations because it defied conventional wisdom: members of the mainstream media asserted it would be a long shot for Trump to pull off a victory. Ultimately, there was an industry-wide systematic failure in the way the polling models were designed and executed. So how did an obscure professor from Stony Brook prove the big polling companies wrong?

Professor Helmut Norpoth's Primary Model

On March 7, 2016, Professor Norpoth predicted that Donald Trump would defeat Hillary Clinton or Bernie Sanders with a confidence level of 87% or 99% respectively. Professor Norpoth turned out to be right and proved that many of the major polling and news organizations were just flat out wrong. He didn’t get everything right, however: his model predicted Trump would win the popular vote, which turned out to be false, but Trump did win the Electoral College.

Professor Norpoth’s primary model is based on two major inputs: the results of election primaries and the “swinging of the pendulum”, or the tendency for the White House party to change after two consecutive terms. In an interview with Fox News, Professor Norpoth explained that since Barack Obama didn’t do as well getting reelected as he did getting elected, it was a strong indication that the 2016 Presidential race would be a swing election. In other words, the momentum was already in favor of Republicans based on historical data, regardless of the nominee.

What does it mean for risk management?

According to ISO 31000, risk is defined as the “effect of uncertainty on objectives”. Hence, risk management is an attempt to manage uncertainty, and the way this is done across many sectors of the economy is to build quantitative models. The results of the 2016 US Presidential election are a solemn reminder of one crucial but seemingly easy to forget fact: the models we build are only approximations of reality, not reality itself. But what happens when an entire industry (pollsters) essentially relies on the same fundamental model without making any modifications based on new information?

The map is not the territory

Alfred Korzybski was a Polish scholar who famously said that “the map is not the territory.” Korzybski was highlighting the fact that a map is really an abstraction of the territory and while it may be useful to us it is not the territory itself. This is an obvious, but perhaps blurry distinction as our reliance and dependence on technology continues to grow.

Have you ever driven to an address under the guidance of a global positioning system (GPS) and been taken to the wrong location? While it may have been an inconvenience at the time, it illustrates our reliance on models and algorithms and the high expectations we set for 100% accuracy all of the time.

Is there really strength in numbers?

Two common questions which a financial institution's senior leadership asks consultants implementing regulatory compliance systems are:

- How are other banks doing it?

- What is the industry standard?

These are actually both really good questions which the senior leadership should be asking their consultants and peers at other financial institutions. It's the idea that the collective intelligence of a diverse group of practitioners will sum up to a more comprehensive and accurate model than any one financial institution can come up with by itself. There is merit in this notion, but what if the collective gets it wrong because of complacency, inadaptability and a general banking culture which discourages dissenting opinions? If risk management is truly about managing uncertainty then isn't there an inherent risk with a strict adherence to an industry standard? Or should financial institutions meet their regulatory expectations and adhere to industry standards, but simultaneously strive to identify limitations in their current models and create a culture which embraces innovation and creativity?

Why Banks Must Evolve their AML Process to Manage Micro-Jurisdictional Risk

United States sanctions policies have evolved over the years, from country-wide embargos to more nuanced approaches targeting specific entities. According to Jacob J. Lew, Secretary of the Treasury, the sanctions implemented today are more focused on bad actors while trying to limit the negative externalities. Lew described his view on the traditional sanctions model at the Carnegie Endowment for International Peace which is eloquently summarized by this quote:

The Evolution of Sanctions

Lew went on to describe how the application of sanctions has evolved over the years, and through better financial intelligence, strategy, and design, the new model can home in directly on bad actors:

The Evolution of US Trade Sanctions

The diagram below illustrates the evolution of US Trade Sanctions. Longstanding sanctions would generally indicate a country-wide embargo, as is the case for Cuba and Iran. However, the Cuban and Iranian sanctions policies are currently in transition, as the traditional sanctions model is becoming deemphasized and would now only be used in extreme circumstances such as the case with North Korea. Sanctions imposed on African countries in 2005-2006 as highlighted in the diagram below were targeted sanctions based on human rights violations, concerns of regional stability or entities involved in undermining the democratic process.

Jurisdictional Risk and AML Systems

The evolution of sanctions highlights how US regulators perceive risk. In the case of the African countries outlined above, sanctions were imposed to prevent the flow of money to the groups and individuals participating in local conflicts. The sanctions imposed were designed to prevent or at least slow down human rights violations. The question is, could this same perception of situational and jurisdictional risk be applied to anti-money laundering (AML) systems?

The Traditional AML Model

Cross-border transfers could be monitored based on a number of factors, but generally models consist of three main elements:

- Frequency: The number of transfers which occurred within a specific time period. Example: 3 transfers over a rolling 7 day time period.

- Amount: The value of each transfer. Example: A minimum threshold of X dollars could be configured in the monitoring system. Other elements would include round dollar amounts and amounts just below minimum reporting requirements.

- Jurisdictional risk: The risk level or score associated with each country. Example: Transfers flowing through high-risk jurisdictions would generally receive greater scrutiny than similar transactions which only flowed through low-risk jurisdictions based on the financial institution’s country risk ranking.
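The three elements above can be combined into a single rule, sketched below in Python under stated assumptions. The `Transfer` type, the `flag_customer` function, the threshold values, and the placeholder country codes (`XA` and `XB`, taken from the ISO 3166-1 user-assigned range) are all hypothetical. Real thresholds and country rankings are specific to each institution.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Transfer:
    customer_id: str
    booked_on: date
    amount: float
    country: str  # ISO 3166-1 alpha-2 code of the counterparty jurisdiction


# Illustrative parameters only; not regulatory values.
MIN_TRANSFERS = 3            # frequency: at least 3 transfers...
WINDOW_DAYS = 7              # ...over a rolling 7 day period
MIN_AMOUNT = 1_000.0         # amount: stand-in for the "X dollars" threshold
HIGH_RISK_COUNTRIES = {"XA", "XB"}  # placeholder codes, not real countries


def flag_customer(transfers, as_of):
    """Return True if one customer's transfers meet all three elements."""
    window_start = as_of - timedelta(days=WINDOW_DAYS - 1)
    hits = [
        t for t in transfers
        if window_start <= t.booked_on <= as_of
        and t.amount >= MIN_AMOUNT
        and t.country in HIGH_RISK_COUNTRIES
    ]
    return len(hits) >= MIN_TRANSFERS
```

The function expects the transfers of a single customer. Detecting round dollar amounts or amounts just below reporting requirements would need additional checks that this sketch leaves out.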

Financial Crimes Enforcement Network (FinCEN) Regulatory Guidance

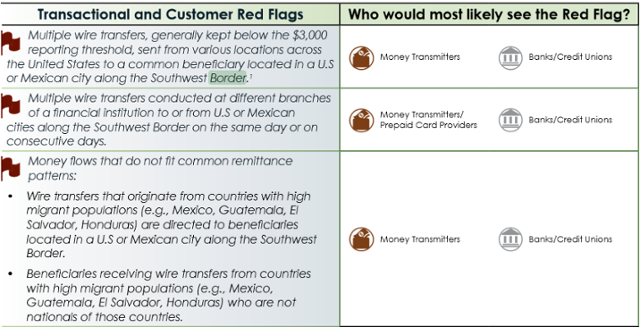

On September 11, 2014, the Financial Crimes Enforcement Network (FinCEN) released an advisory titled “Guidance on Recognizing Activity that May be Associated with Human Smuggling and Human Trafficking – Financial Red Flags”, which was intended to help financial institutions identify human trafficking red flags. Notably, FinCEN specifically identified both US and Mexican cities on the Southwest border as areas which require additional scrutiny, as described in the transactional and customer red flags listed below:

Source: https://www.fincen.gov/statutes_regs/guidance/pdf/FIN-2014-A008.pdf

Micro-Jurisdictions: The Southwest Border

The image of the Southwest border below illustrates that there are multiple cities in the United States and Mexico that would fall within FinCEN’s advisory.

Micro-Jurisdictions: The Tri-Border Area (TBA)

The Tri-Border area is the region where the borders of Brazil, Argentina, and Paraguay meet, which has been known as a criminal haven for organized crime groups, terror groups and drug traffickers. In a report titled “TERRORIST AND ORGANIZED CRIME GROUPS IN THE TRI-BORDER AREA (TBA) OF SOUTH AMERICA” the author describes various conditions in the Tri-Border Area (TBA) that are very favorable to organized crime and terror groups in the below quote:

The three cities which are highlighted in the report are Ciudad del Este, Foz do Iguaçu and Puerto Iguazú because of their proximity to all three countries’ borders.

Challenges of natural language processing (NLP)

Traditional AML systems usually have functionality to support a list of country codes which can be segmented into categories such as low, medium, high, and very high risk. As a result, transactions which flow through higher risk jurisdictions should, in theory, receive greater scrutiny than lower risk transactions. Financial institutions usually store country codes in various systems based on the International Organization for Standardization (ISO) standard (ISO 3166-1) which can be represented in three formats:

- ISO 3166-1 alpha-2: Two-letter country codes.

- ISO 3166-1 alpha-3: Three-letter country codes.

- ISO 3166-1 numeric: Three-digit country codes.
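To make the three formats concrete, the sketch below lists the codes for the countries mentioned in this article and converts any of the three representations to alpha-2. The `CountryCode` type and the `to_alpha2` helper are hypothetical names for this example, and a production system would load the full ISO 3166-1 list rather than this five-row sample.

```python
from typing import NamedTuple


class CountryCode(NamedTuple):
    alpha2: str
    alpha3: str
    numeric: str  # stored as text so leading zeros are preserved


# Small sample of ISO 3166-1 entries; not the complete standard.
COUNTRIES = [
    CountryCode("AR", "ARG", "032"),
    CountryCode("BR", "BRA", "076"),
    CountryCode("MX", "MEX", "484"),
    CountryCode("PY", "PRY", "600"),
    CountryCode("US", "USA", "840"),
]


def to_alpha2(code: str) -> str:
    """Map an alpha-2, alpha-3 or numeric code to its alpha-2 form."""
    key = code.strip().upper()
    if key.isdigit():
        key = key.zfill(3)  # a source system may have dropped leading zeros
    for country in COUNTRIES:
        if key in country:
            return country.alpha2
    raise KeyError(f"Unknown country code: {code!r}")
```

Keeping the numeric code as text matters because a value such as 032 becomes 32 when stored as an integer, which is one way the same country ends up looking different across systems.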

The guidance issued by FinCEN adds another layer of complexity to AML systems because the red flags are not contained in a predefined list of country codes, but in a free text field such as a city, which creates a number of challenges.

The image below shows an address in Ciudad Juarez, Mexico. Many financial institutions will have similar field definitions to capture addresses in various systems for client on-boarding or to facilitate a transaction. The only field which is based on a predefined list is the country code and sometimes the state/province; most of the other fields are free text. Some financial institutions will attempt to validate the address based on the information given, but not all organizations have the capacity to do so.

If most traditional AML models rely on country codes as a key attribute to determine suspicious activity, then how will a free text city be handled?
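One possible starting point, sketched here under stated assumptions, is to normalise the free-text city (removing accents, case and punctuation) before comparing it with a watch list keyed by country code and city. The `ALIASES` map, the `WATCH_LIST` contents and both function names are hypothetical. The list simply reuses cities named in this article and is not a reproduction of any regulatory list.

```python
import unicodedata

# Hypothetical abbreviation map; a real one would be curated per country.
ALIASES = {"cd": "ciudad"}


def normalise_city(raw: str) -> str:
    """Reduce a free-text city to a comparable key without accents, case or punctuation."""
    decomposed = unicodedata.normalize("NFKD", raw)
    no_accents = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    spaced = "".join(ch if ch.isalnum() else " " for ch in no_accents.casefold())
    return " ".join(ALIASES.get(token, token) for token in spaced.split())


# Illustrative watch list of (alpha-2 country code, normalised city) pairs.
WATCH_LIST = {
    ("MX", normalise_city("Ciudad Juárez")),
    ("PY", normalise_city("Ciudad del Este")),
    ("BR", normalise_city("Foz do Iguaçu")),
    ("AR", normalise_city("Puerto Iguazú")),
}


def is_watched(country_code: str, city: str) -> bool:
    """Check a country code plus free-text city against the watch list."""
    return (country_code.strip().upper(), normalise_city(city)) in WATCH_LIST
```

Exact matching after normalisation still misses misspellings and cities typed into the wrong address field, so some form of fuzzy matching or address validation would likely be needed on top of this.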

The Micro-Jurisdiction AML Model

Essentially, the FinCEN advisory is pointing to an extension of the traditional AML model where one of the additional attributes in the model would be a micro-jurisdiction. The main three components (Frequency, Amount and Jurisdictional risk) of the traditional AML model would still apply, but a fourth attribute would need to be added to the model and calibrated accordingly.

- Frequency: The number of transfers which occurred within a specific time period. Example: 3 transfers over a rolling 7 day time period.

- Amount: The value of each transfer. Example: A minimum threshold of X dollars could be configured in the monitoring system. Other elements would include round dollar amounts and amounts just below minimum reporting requirements.

- Jurisdictional risk: The risk level or score associated with each country. Example: Transfers flowing through high-risk jurisdictions would generally receive greater scrutiny than similar transactions which only flowed through low-risk jurisdictions based on the financial institution’s country risk ranking.

- Micro-Jurisdictional risk: The risk level or score associated with specific towns, zip codes or cities. Example: Transfers flowing through high-risk micro-jurisdictions would generally receive greater scrutiny than a similar transaction which only flowed through low-risk micro-jurisdictions based on the financial institution’s risk ranking.
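A minimal sketch of how the fourth attribute might change the outcome is given below. The `Activity` type, the point values and the thresholds are invented for illustration; calibrating them is exactly the institution-specific work the model calls for.

```python
from dataclasses import dataclass

# Hypothetical points per risk category; both rankings reuse the same scale.
RISK_POINTS = {"low": 0, "medium": 1, "high": 2, "very high": 3}

MIN_TRANSFERS = 3      # illustrative frequency threshold
MIN_AMOUNT = 1_000.0   # illustrative amount threshold


@dataclass(frozen=True)
class Activity:
    transfer_count: int   # transfers within the rolling window
    largest_amount: float
    country_risk: str     # category from the country risk ranking
    micro_risk: str       # category assigned to the town, zip code or city


def scrutiny_score(activity: Activity) -> int:
    """Add up points from the four attributes; higher means more scrutiny."""
    score = 0
    if activity.transfer_count >= MIN_TRANSFERS:
        score += 1
    if activity.largest_amount >= MIN_AMOUNT:
        score += 1
    score += RISK_POINTS[activity.country_risk]
    score += RISK_POINTS[activity.micro_risk]
    return score
```

With these made-up weights, two otherwise identical sets of transfers in a medium-risk country score 3 when the city is low risk and 6 when the city is very high risk, which is the distinction a country-level model cannot draw.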

The Implications of Micro-Jurisdictional Risk

If regulators continue to issue guidance which highlights specific geographic areas as part of a money laundering typology, then traditional AML systems must evolve or engage third party vendors which can process large volumes of data quickly, and without impacting the daily operations of the financial institution’s overall AML program. This raises another question: to what extent should financial institutions be proactive?

Should financial institutions begin to gather their own intelligence to identify potential high-risk micro-jurisdictions or should they wait for advisories from their respective regulators? The amount of intelligence gathered could be relative to the financial institution’s risk appetite and geographic footprint.

Traditional AML systems may not be the optimal solution to process large volumes of data to extract towns, zip codes and cities from free text fields. Hence, using a third party solution based on the latest advances in computing technology that can cleanse, prepare and risk rate the data before it is sent to the traditional AML systems would be beneficial to financial institutions.

Identifying and monitoring high-risk micro-jurisdictions could extend a financial institution’s AML program’s sophistication by focusing on the more granular attributes of transactional activity and consequently pushing the risk-based approach to new horizons.